← Blog from Guindo Design, Strategic Digital Product Design

Product onboarding design via CLI

Launching a CLI (command-line interface) is no longer the exclusive domain of engineering. Today, any product developer can generate a functional terminal tool with a simple AI prompt. But the ability to generate code doesn't equate to building a product.

Most CLIs lose their users within the first 30 seconds because nobody has thought about their onboarding. The real bottleneck is no longer the code, it's the design.

What is a CLI?

Both a CLI and an AI chat are text-based interfaces, but with a critical difference that used to be a major barrier to entry: using a terminal forces you to memorize rigid commands to operate a system. It's a "recall" interface, not a "recognition" one.

In an AI chat, the LLM acts as an interpreter, another interface (the model) that translates your human language ("fix this") into the technical instructions the system understands. Therefore, the friction with the product shifts, and it's no longer necessary to learn the command manual: the barrier to entry ceases to be technical and becomes a matter of trust and onboarding.

The fact that the technical barrier has fallen doesn't mean graphical interfaces are dead; they're simply less efficient for certain use cases. For an AI to analyze, edit, and create files on your computer, it's much more efficient to point the AI at your files than to upload your files to the AI. That's why platforms like Anthropic or OpenAI often release a CLI as a key component of their ecosystem.

- Performance and latency. Web interfaces are slow by definition (asset loading, animations, rendering waits). In the terminal, there's nothing to decorate; the data flow is direct and execution is immediate.

- Process automation. A web chatbot is designed to solve tasks one by one through conversation. A CLI allows the AI to be integrated into systematic workflows, processing hundreds of files automatically while you focus on something else.

- Context control. It is much simpler (and generates less security friction) to authorise an AI tool to look at a local folder than to force the user to manually select, package and upload sensitive information to a website.

If the connection between AI and your files is poorly designed, the product experience breaks down, no matter how intelligent the underlying language model is. The problem is that UX and product developers often ignore this area under the pretext that "it's a technical issue," a dereliction of duty that leaves engineering alone to face the danger of building powerful but unfriendly tools. A CLI is, first and foremost, a text-based user interface that requires the same hierarchy, tone, and friction management as any visual application. Engineering provides the power, but we must design the controls to prevent the user from abandoning the product within thirty seconds of opening the terminal.

What data do you need to add value?

In product design, any data we demand before demonstrating value is a wall we erect during onboarding. In the case of a CLI, the entry strategy boils down to a single question: Does your tool provide any real value before connecting to the server? This distinction is critical because it determines whether authentication should be an access barrier or an optional step for users who have already tried the product on their machines.

Side 1: Identity as a toll

If your tool relies on an external server to process user information, there's no value to be gained without identifying the user. Requiring a login on the first line is an architectural necessity, since the intelligence resides in the cloud, not locally, and the user's identity is the only link connecting their device to the system responsible for resolving the issue.

However, this is the riskiest moment for the product, as forcing the user to leave the terminal and go to the browser represents a critical break in their mental flow.

Taking someone out of the black screen is dangerous because you're launching them into an environment full of distractions (another browser tab) and you're breaking the promise of CLI immediacy. That's why onboarding success here is measured exclusively by return speed. The industry has bridged that little abyss with the device flow (RFC 8628, the OAuth 2.0 Device Authorization Grant), a standard where the terminal opens the browser for you and waits in the background. References like Stripe or Claude Code have turned what was previously a manual and error-prone process (copying and pasting alphanumeric tokens) into a mere five-second procedure: a click on the web, a quick authorisation, and the terminal comes to life automatically without the user having had to handle a single key.
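To make the "waits in the background" part concrete, here is a minimal sketch of the polling loop that RFC 8628 describes. The `request_token` callable is a hypothetical stand-in for the HTTP call to the token endpoint, and the default interval and expiry are assumptions; a real server returns both values with the device code.

```python
import time
from typing import Callable, Dict


def poll_for_token(
    request_token: Callable[[str], Dict[str, str]],
    device_code: str,
    interval: float = 5.0,
    expires_in: float = 900.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll the token endpoint until the user approves in the browser.

    `request_token` is a hypothetical callable: it should send the device
    code with grant_type=urn:ietf:params:oauth:grant-type:device_code and
    return the decoded JSON response.
    """
    waited = 0.0
    while waited < expires_in:
        response = request_token(device_code)
        if "access_token" in response:
            return response["access_token"]
        error = response.get("error")
        if error == "slow_down":
            # RFC 8628: the client must add 5 seconds to its polling interval.
            interval += 5
        elif error != "authorization_pending":
            # access_denied, expired_token, or anything unexpected.
            raise RuntimeError(f"Login failed: {error}")
        sleep(interval)
        waited += interval
    raise TimeoutError("The login code expired. Run the login command again.")
```

From the user's side none of this is visible: the terminal just stays quiet until the browser click lands, which is the whole point of the pattern.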

At the opposite end of the spectrum is the AWS CLI, which maintains a manual configuration model (aws configure) where the user must generate and paste their access keys. Although this system is designed for security in environments where a browser is not always available and granular permission management is mandatory, for a casual user it represents a barrier to entry. AWS prioritises protocol robustness over onboarding agility.

Side 2: first value, then identity

For tools that can run autonomously, login is not a barrier to access, but an optional enhancement. Success lies in instant gratification: the user downloads the tool and verifies that it performs a useful task on their own computer before surrendering their identity.

References such as Supabase or Prisma treat the local experience as a high-fidelity technical demo. You can spin up a full database or generate code schemas without going through a signup form. Authentication only appears organically when the user decides to take the next step: syncing a project to the cloud or purchasing managed infrastructure. On this side, product design is about removing any initial friction so that the utility is unquestionable from the first second; login is simply the final step to supercharge a tool that has already proven to you that it works.

Choosing a side: a matter of honesty

If your tool is useless without the cloud, then the first option (mandatory login) is the only honest approach. Trying to disguise this with an empty "local trial" is just wasting the user's time. Conversely, if you can offer immediate utility on the user's computer, even if minimal, that local value is the hook that builds the necessary trust before you require registration.

The key is knowing how to transition between the two. Prisma is a good example of this: its strategy consists of not changing the interface, but the depth.

- If the user only wants to set up their project locally, the tool provides them with the files and allows them to work autonomously.

- Only when the user attempts to connect to a real database does the CLI prompt for authentication.

The tool is invisible while you are working locally and only raises its hand to ask for credentials when the task really requires it.
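One way to picture this "same interface, different depth" idea is to mark only the cloud-bound commands as needing credentials. The sketch below is an illustration, not Prisma's implementation: the `session` store, the command names and the `login` callable are all hypothetical.

```python
from typing import Callable, Dict, Optional

# Hypothetical in-memory session; a real CLI would read a stored token.
session: Dict[str, Optional[str]] = {"token": None}


def requires_auth(command: Callable[..., str]) -> Callable[..., str]:
    """Mark a command as cloud-bound: ask for login only when it runs."""
    def wrapper(*args, login: Callable[[], str], **kwargs) -> str:
        if session["token"] is None:
            # The only moment the CLI "raises its hand".
            session["token"] = login()
        return command(*args, **kwargs)
    return wrapper


def init_project(name: str) -> str:
    # Local-only command: never touches credentials.
    return f"Created {name}/ with local config files"


@requires_auth
def connect_database(url: str) -> str:
    # Cloud-bound command: wrapped so it triggers login on first use.
    return f"Connected to {url}"
```

Calling `init_project("demo")` never asks for anything, while the first `connect_database(...)` call triggers `login` once and later calls reuse the stored token.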

How the user connects: the logistics of "Auth"

Once the user needs to identify themselves, the CLI UX transitions to permission management. As there are no forms, the design involves choosing how to connect the terminal to the browser while minimising cognitive load. Professional tools have converged on four methods that prioritise convenience or robustness, depending on the case:

- Device Authorization Flow (context resilience). It's the solution for when the terminal and browser are not on the same machine (SSH, Docker, or remote servers). The CLI displays a code and the server waits for external authorisation. It's the standard in Vercel or Railway because it is the only one that survives in environments where there is no direct connection between applications.

- Localhost callback (zero friction). The CLI captures the login automatically, like in Claude Code. It's the most seamless experience on a personal computer, but it requires the terminal and browser to talk directly, something corporate environments often block.

- Human validation. Instead of abstract codes, the terminal and the web show the same random phrase (e.g., enjoy-enough-outwit-win). Stripe uses this feature to turn a security validation into a half-second visual check.

- API Keys (security and automation). The method of generating a key and pasting it manually. Although it is manual, it is the only viable one for automatic processes (CI/CD) and environments that require strict permission management or 2FA. It is the mandatory security fallback: if all else fails, the manual key always works.
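The choice between these methods can often be made by the tool instead of the user. The following is a rough sketch under stated assumptions: `MYCLI_API_KEY` is a hypothetical variable name, `CI` is a variable many CI systems set, and `SSH_CONNECTION` is what OpenSSH sets in remote sessions. The order of the checks is a design opinion, not a standard.

```python
import os
import sys
from typing import Mapping


def choose_auth_method(env: Mapping[str, str], stdin_is_tty: bool) -> str:
    """Pick a login method from the environment, explicit key first."""
    # MYCLI_API_KEY is a hypothetical variable name for a pasted key.
    if env.get("MYCLI_API_KEY"):
        return "api_key"
    # In CI, or without a TTY, nobody is there to click in a browser.
    if env.get("CI") or not stdin_is_tty:
        return "api_key_required"
    # Remote shell: the browser lives on another machine.
    if env.get("SSH_CONNECTION"):
        return "device_flow"
    # Personal computer: terminal and browser can talk directly.
    return "localhost_callback"


if __name__ == "__main__":
    print(choose_auth_method(os.environ, sys.stdin.isatty()))
```

The design choice worth noting is that the user never sees a menu of four options: the environment answers the question, and the manual key remains available as the fallback.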

Before auth: the first run also counts

The success of a CLI is decided before the first piece of data is requested. Whereas a poor design forces the user to do homework (configuring regions or keys) before seeing anything, the tools that dominate the ecosystem invert the burden: they first deliver a sample of value and then ask for registration.

When commands such as create-next-app or supabase start generate files and spin up a server in seconds, they're not just setting up folders, they're performing a show of force. Seeing something moving in their terminal provides the user with immediate gratification, which buys their patience. At this point, logging in ceases to be a barrier and becomes a logical formality to protect or expand the work already in front of them. The design here isn't about asking permission to start, but about demonstrating that it's worth sticking around for.

Where conversion dies

Even the best flow crashes if the details are bad. On the terminal, most errors are usually UX failures disguised as engineering.

Copy-pasting keys, for example, is negligence: it forces the user to handle sensitive data which ends up exposed in the clipboard or published by mistake. Any workflow that depends on the user manually managing a long key is a factory of frustration and support tickets.

Added to this is the strategic error of demanding an account before installation. Forcing someone to navigate a website to generate credentials before the CLI demonstrates its value has a huge conversion cost. The same applies to error design: on a text-based screen, a generic «Authorization Failed» message is a dead end. If the design doesn't explain whether the problem is the system clock or a corporate proxy, the user will simply close the terminal.
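As an illustration of what a less generic error could look like, here is a small sketch that checks the two causes mentioned above before giving up. The five-minute tolerance, the function name and the message wording are assumptions; the clock check relies on the `Date` header that HTTP servers normally send with a response.

```python
from datetime import datetime, timedelta, timezone
from email.utils import parsedate_to_datetime
from typing import Mapping

MAX_SKEW = timedelta(minutes=5)  # assumed tolerance, not a standard value


def explain_auth_failure(
    server_date: str, local_now: datetime, env: Mapping[str, str]
) -> str:
    """Turn a bare authorization failure into a message with a next step."""
    # The HTTP Date header tells us what time the server thinks it is.
    skew = abs(local_now - parsedate_to_datetime(server_date))
    if skew > MAX_SKEW:
        minutes = int(skew.total_seconds() // 60)
        return (
            f"Authorization failed: your system clock is off by about "
            f"{minutes} min. Sync your clock and try again."
        )
    proxy = env.get("HTTPS_PROXY") or env.get("https_proxy")
    if proxy:
        return (
            f"Authorization failed: traffic goes through the proxy {proxy}, "
            "which may be blocking the login. Ask your IT team to allow it."
        )
    return (
        "Authorization failed: the server rejected the credentials. "
        "Run the login command again."
    )
```

Each branch ends with something the user can do, which is the difference between an error message and a dead end.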

Finally, we must flee the mirage of vanity metrics. Celebrating downloads in registries like npm (the public repository from which these tools are distributed) is fooling yourself. Between security scans and automated processes, the download volume is pure noise. In a text-based interface, the only real metric for success isn't how many people download the package, but how many manage to complete the first meaningful command. If you optimise for download numbers instead of onboarding success rate, you're designing blind.
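The metric itself is simple to compute once the tool records two events per installation. This sketch assumes an opt-in telemetry log of `(install_id, event_name)` pairs; the event names `first_run` and `first_command_ok` are hypothetical.

```python
from typing import Iterable, Tuple


def onboarding_success_rate(events: Iterable[Tuple[str, str]]) -> float:
    """Share of installs that completed a first meaningful command.

    Each event is (install_id, event_name); the event names are hypothetical.
    """
    started = set()
    succeeded = set()
    for install_id, name in events:
        if name == "first_run":
            started.add(install_id)
        elif name == "first_command_ok":
            succeeded.add(install_id)
    if not started:
        return 0.0
    # Only count successes from installs that we actually saw start.
    return len(succeeded & started) / len(started)
```

Four installs that ran the tool and one that finished a real command give a rate of 0.25, a number that says far more about the onboarding than any download counter.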

"Free during the beta" is not free

In the midst of uncertainty about AI costs, the temptation to hide behind «free for now» to avoid scaring people off is a mistake. The way to manage the bubble is not through ambiguity, but through transparency of consumption. If computing is expensive, transfer it to the design and don't subsidise it: display clear usage limits or allow the user to bring their own infrastructure by connecting their own API key (Bring Your Own Key).

CLI design in 2026 isn't just about moving data; it's about demonstrating that the value you deliver justifies the energy cost you consume. Developer trust isn't earned by handing out investor money, but by offering a predictable spending model from the very first command. If the user has control over the meter, the fear of pay-per-use disappears: cost ceases to be an adoption barrier and becomes just another variable in their architecture.
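Putting the meter in the user's hands can be as small as one line of output. The sketch below is only an illustration: the variable name `MYCLI_PROVIDER_KEY`, the monthly request quota and the wording are all assumptions.

```python
from typing import Mapping


def billing_banner(env: Mapping[str, str], used: int, limit: int) -> str:
    """One line of output: tell the user who pays and how much is left."""
    # MYCLI_PROVIDER_KEY is a hypothetical variable for a user-supplied key.
    if env.get("MYCLI_PROVIDER_KEY"):
        return "Using your own API key: usage is billed by your provider."
    remaining = max(limit - used, 0)
    return f"Hosted plan: {remaining} of {limit} requests left this month."
```

Printing this after each command turns cost into something the user can plan around instead of a surprise at the end of the beta.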

The design of the invisible

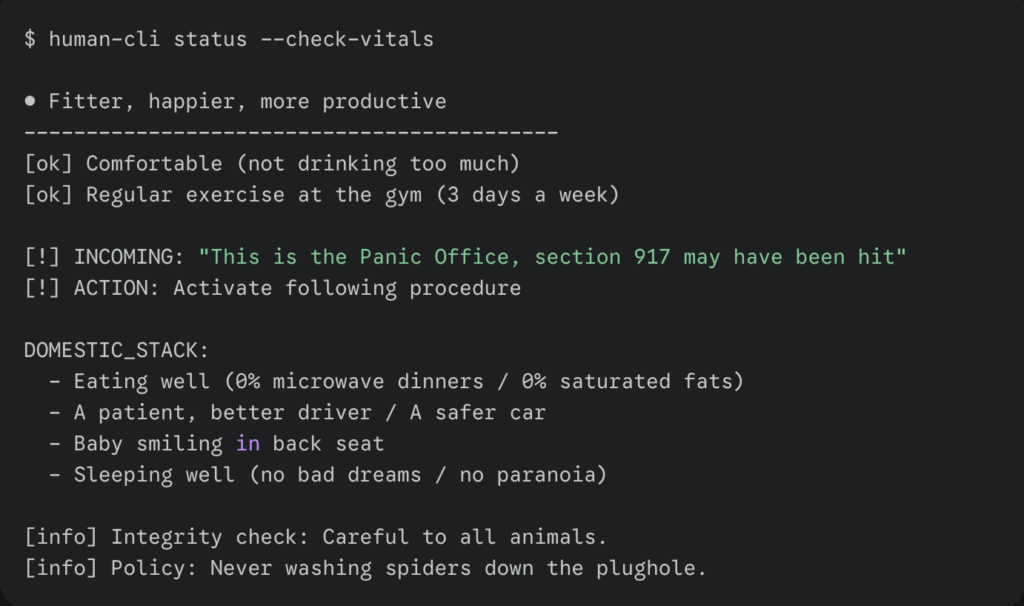

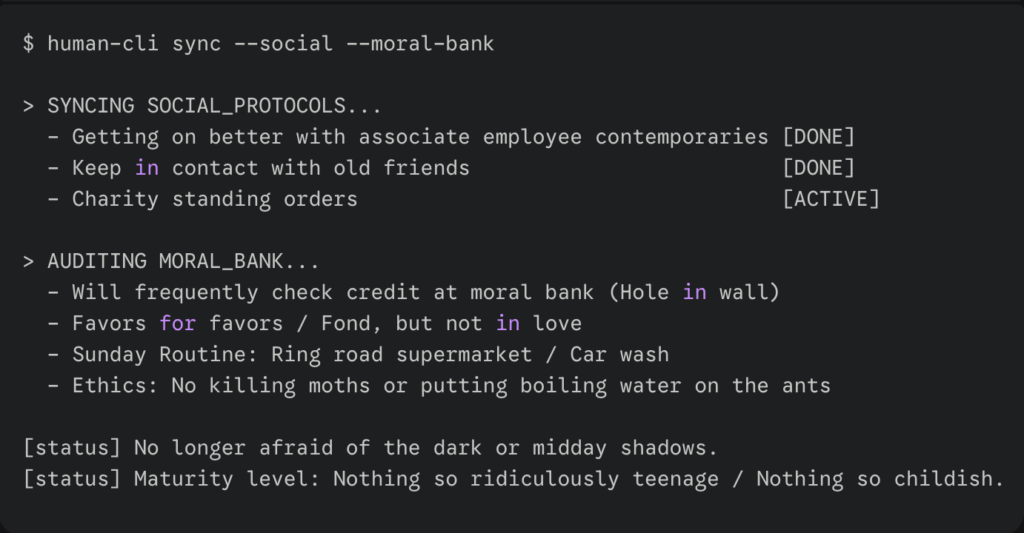

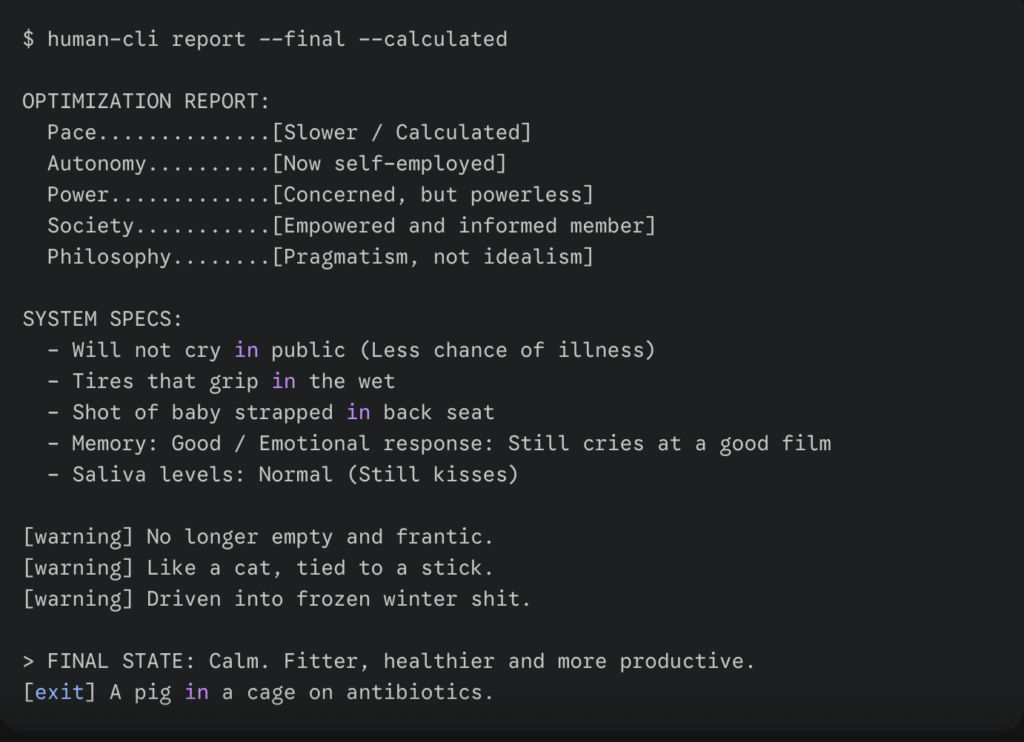

If you're coming from product UX/UI design, most of your instincts are right, but they need translating. In the terminal, there are no grids or hover states; hierarchy is temporal, not spatial. The first line of output is your headline, and the last is your call to action. Discovery doesn't live in a side menu but in an impeccable --help and in default values so sensible that the user won't need the manual to proceed.

However, the physical rules of this environment demand a distinct approach to risk management. There is no undo button here, so design consists of managing irreversibility through explicit confirmations or test modes such as --dry-run. Furthermore, your interface should be bilingual: the output has to be human-readable and machine-processable. The --json flag isn't a decorative luxury; it's the standard that allows your tool to stop being an island and become part of a larger apparatus, permitting other scripts to connect with your product without anything breaking.
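Both flags fit in a few lines with Python's standard argparse module. This is a sketch of a hypothetical `mycli-delete` command; the actual deletion is left out on purpose so the example only shows the two output modes.

```python
import argparse
import json
from typing import List


def run(argv: List[str]) -> str:
    parser = argparse.ArgumentParser(prog="mycli-delete")  # hypothetical tool
    parser.add_argument("paths", nargs="+", help="files to delete")
    parser.add_argument("--dry-run", action="store_true",
                        help="show what would happen without doing it")
    parser.add_argument("--json", action="store_true",
                        help="print machine-readable output")
    args = parser.parse_args(argv)

    # A real tool would delete the files here, and only when not in dry-run.
    action = "would delete" if args.dry_run else "deleted"
    if args.json:
        # Same information, shaped for other programs instead of for eyes.
        return json.dumps({"dry_run": args.dry_run, "paths": args.paths})
    return "\n".join(f"{action}: {p}" for p in args.paths)
```

Calling `run(["--dry-run", "a.txt"])` returns a sentence a person can read, while adding `--json` returns the same facts as an object a script can parse.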

Designing for the terminal is ultimately an exercise in minimalism where aesthetics never take precedence over compatibility. Respecting standards such as NO_COLOR and correctly using ANSI sequences isn't a technical detail, it's inclusive design: it ensures your tool works just as well on a modern monitor as it does in an error log or a screen reader. If you ignore this area, considering it an engineers' problem, you're letting your product die in the most important tool of today's ecosystem.
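Honouring the NO_COLOR convention takes very little code. The sketch below follows the rule that any non-empty NO_COLOR value disables colour and adds one assumption of its own: output that is not going to a terminal also gets plain text. The function names are illustrative.

```python
import os
import sys
from typing import Mapping


def color_enabled(env: Mapping[str, str], is_tty: bool) -> bool:
    """Decide whether to emit ANSI colour codes."""
    # NO_COLOR convention: any non-empty value disables colour.
    if env.get("NO_COLOR"):
        return False
    # Piped output (logs, other programs) gets plain text.
    return is_tty


def paint(text: str, enabled: bool) -> str:
    # \033[32m switches to green, \033[0m resets the style.
    return f"\033[32m{text}\033[0m" if enabled else text


if __name__ == "__main__":
    enabled = color_enabled(os.environ, sys.stdout.isatty())
    print(paint("Done", enabled))
```

Because the decision is made in one place, the same tool produces a green "Done" on a developer's screen and clean text in a log file or a screen reader.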

The two references to keep close are clig.dev, which is the closest thing to a manual that exists in the field, and the article «CLI for Everybody» by Adedayo Agarau, which is a plain-language introduction for non-developers.