Blog from Guindo Design, Strategic Digital Product Design

The importance of consistency in AI-based products

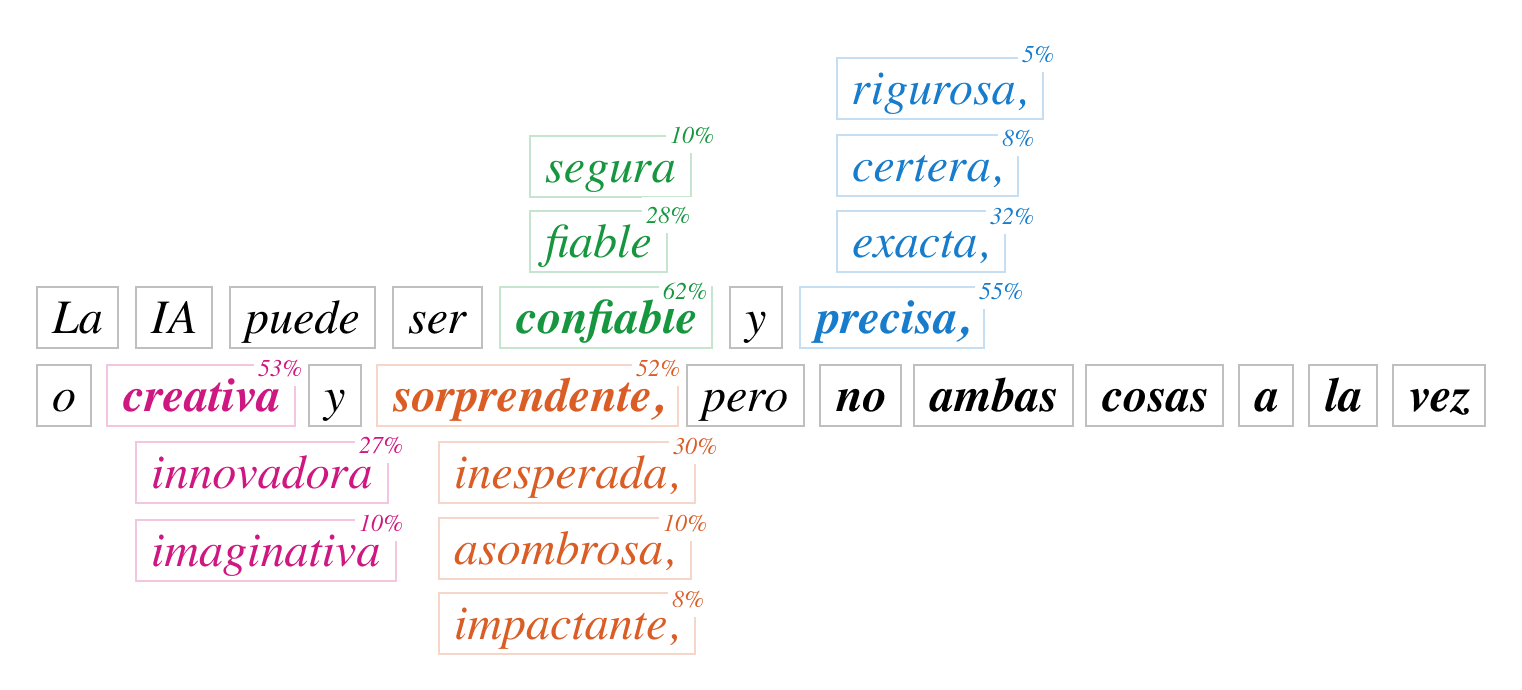

AI content generation systems are powerful, but they can also be bewildering. One of the biggest challenges when working with large language models (LLMs) is reproducibility, i.e. getting the same answer to the same question. If you try ChatGPT, you will see that it sometimes answers slightly differently even when you repeat the same query.

In theory, there are settings that should make the responses always the same: lowering the temperature to 0 or, in other words, telling the model to always choose the word with the highest probability. But in practice, even setting the model to “always choose the same” does not achieve total consistency. And this doesn't just happen in commercial APIs: even when models run in controlled environments, responses can vary.
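A minimal sketch of what the temperature setting does when the next word is picked, in plain Python. The `pick_token` helper and the toy scores are hypothetical illustrations, not any vendor's API: at temperature 0 the choice collapses to the highest-scoring word, while higher values spread the choice across the alternatives.

```python
import math
import random


def pick_token(scores, temperature, rng):
    """Pick one candidate word from a {word: score} mapping (hypothetical helper)."""
    if temperature == 0:
        # Greedy choice: always the highest-scoring word.
        return max(scores, key=scores.get)
    # Softmax with temperature: scale the scores, then exponentiate.
    top = max(scores.values())
    words = list(scores)
    weights = [math.exp((scores[w] - top) / temperature) for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


# Toy scores for the next word after "The sky is" (made-up numbers).
scores = {"blue": 2.0, "clear": 1.5, "falling": 0.2}
rng = random.Random()

greedy = {pick_token(scores, 0, rng) for _ in range(100)}
varied = {pick_token(scores, 1.0, rng) for _ in range(100)}
print(greedy)  # a single word
print(varied)  # usually several different words
```

In this toy version the temperature-0 path is fully repeatable. Real systems can still drift for reasons that lie outside this selection step.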

This variability is inherent in language models. In some tools it is a great capability, but in productivity environments it can be frustrating or even risky for the product.

A recent technical analysis by Horace He at Thinking Machines explored why large language models (LLMs) are not always consistent, even when configured to be. According to the article, small rounding differences during calculations mean that changing the order of operations can produce slightly different results. As a result, the AI sometimes does not produce exactly the same answer. However, in simpler, more controlled calculations the results do match, so the full explanation is a little more complex. While the technical details require specialised knowledge, the article offers important product and user experience lessons that we can apply without going into the mathematics.
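The effect of operation order can be seen with ordinary floating-point numbers, outside any AI model. A small Python illustration (not taken from the article itself): adding the same three numbers in two different groupings gives two slightly different totals, because each intermediate result is rounded.

```python
a, b, c = 0.1, 0.2, 0.3

left_first = (a + b) + c   # add a and b first
right_first = a + (b + c)  # add b and c first

print(left_first)                 # 0.6000000000000001
print(right_first)                # 0.6
print(left_first == right_first)  # False
```

The difference is tiny, but a model performs a very large number of such operations, and a tiny difference can be enough to tip the choice between two nearly tied words.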

Consistency breeds confidence...

Imagine a calculator that, when you type 2 + 2, sometimes gives you 4 and sometimes 3.999. Technically it is almost the same, but users would quickly lose confidence in it.

The same applies to AI-based products: if a customer asks for instructions, a legal text or a product description, and the answer changes slightly each time, this raises doubts about the reliability of the system. Consistency is especially important when:

- Clients need repeatable results (regulated industries or compliance documentation).

- Technical teams need to debug or reproduce a problem reported by a user.

- Brands want to control tone, voice and precision of language across different markets.

...but creativity needs variety

On the other hand, variety is not always a mistake: sometimes it is a necessary feature.

If someone uses AI to generate marketing ideas, draft stories or design concepts, they expect a range of responses. Predictability in this case would be perceived as flat and uninspiring. Users value different things in different contexts:

- In creative tasks, randomness is perceived as playful and valuable.

- In instructional or factual tasks, randomness is perceived as unprofessional and a risk.
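One way to act on this distinction is to choose generation settings per task type rather than once for the whole product. The sketch below is purely illustrative: `GenerationSettings`, `settings_for`, the task names and the temperature values are all hypothetical, not a recommendation or any real library's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationSettings:
    temperature: float  # 0 = always pick the most likely word
    label: str          # what the interface could tell the user


# Hypothetical mapping from task type to behaviour.
SETTINGS_BY_TASK = {
    "brainstorm": GenerationSettings(temperature=0.9, label="Varied ideas"),
    "instructions": GenerationSettings(temperature=0.0, label="Consistent output"),
    "legal_text": GenerationSettings(temperature=0.0, label="Consistent output"),
}


def settings_for(task: str) -> GenerationSettings:
    # Unknown tasks fall back to the consistent, lower-risk behaviour.
    return SETTINGS_BY_TASK.get(task, SETTINGS_BY_TASK["instructions"])


print(settings_for("brainstorm").temperature)    # 0.9
print(settings_for("instructions").temperature)  # 0.0
```

As noted earlier, a temperature of 0 narrows variation but does not by itself guarantee identical answers.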

System transparency and trade-offs

Sometimes results vary due to factors outside the user's control, such as system load. If 100 people use the platform at the same time, the AI might process requests differently than if there is only one user. Thus, the same input may produce different results depending on demand. What is a normal fact for an engineer may look like a mistake to the end user if it is not explained to them.
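A toy analogy in Python, not a description of how any real inference server works: the same four numbers are added in groups of different sizes, standing in for requests being processed in larger or smaller batches. The helper names are hypothetical. The grouping alone changes the total.

```python
def naive_sum(values):
    # Explicit loop: recent Python versions make the built-in sum()
    # more accurate for floats, which would hide the effect shown here.
    total = 0.0
    for value in values:
        total += value
    return total


def chunked_sum(values, chunk_size):
    # Add up each chunk separately, then add the partial totals.
    partials = [
        naive_sum(values[i:i + chunk_size])
        for i in range(0, len(values), chunk_size)
    ]
    return naive_sum(partials)


values = [1e16, 1.0, -1e16, 1.0]  # the exact total is 2.0

print(chunked_sum(values, 4))  # 1.0
print(chunked_sum(values, 2))  # 0.0
```

Neither result is the exact total, and which one you get depends only on how the work was grouped, something the end user never sees.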

This principle of system transparency is not new: it predates AI and is still valid today. There is no need to hide this behaviour from users. For example, if a consistent answer will take longer, say so clearly in messages:

- “Processing your request, this may take a few seconds...”

- “Generating a consistent response, thank you for your patience.”

- “We are verifying that the results are accurate.”

Clear communication reduces user frustration and sets the right expectations, but trade-offs often have to be made in the platform. Making AI deterministic (always consistent) can mean it runs slower, because more processing has to be performed. In casual or consumer (B2C) platforms, speed and immediate output are often the priority; in enterprise (B2B) or regulated environments, reliability and efficiency are more important.

The decision to prioritise speed or reliability is not a technical one; it is a product design one. Do you want to give the answer now, even if it varies a little, or would you prefer it to take longer but always be the same?

At StepAlong, we see this on a daily basis. For example, the formatting of instructions from text or PDFs, or the consistent translation of instructions, is fundamental: both brands and customers expect the parts to always have the same names and the techniques to be adapted to the market. This is why the translation process is not immediate, as the AI must not only understand the context of each product and the nomenclature to be used, but also respect the terminology defined by each company.

Whether AI is random or deterministic is not an absolute fact: it is a design decision.

Giving users control, communicating clearly and adapting the system's behaviour to the context are the factors that build trust and generate real value.